Higher Education Beyond 2030: Principles, pedagogy, and the people we keep leaving out

In mid-to-late March 2026, I attended a session exploring the future of higher education, structured around the recently published UNESCO roadmap Transforming Higher Education: Global Collaboration on Visioning and Action (UNESCO, 2026). The room brought together academics at every career stage, from doctoral researchers to full professors, and what struck me most was not any single argument made but the collective mood: a genuine appetite for transformation sitting alongside a sober recognition of how wide the gap remains between the sector's stated values and its daily practice.

I am both a doctoral student and a university staff member, and I experience that gap from both sides simultaneously. This post is a reflection on that session and on the roadmap itself. A companion piece follows, which takes the same arguments and grounds them in the specific material conditions of Glasgow, where I work and study. What I want to do here is make the principled case about digital access, pedagogy, and whose knowledge counts, and be direct about what that case asks of educators, researchers, and policymakers.

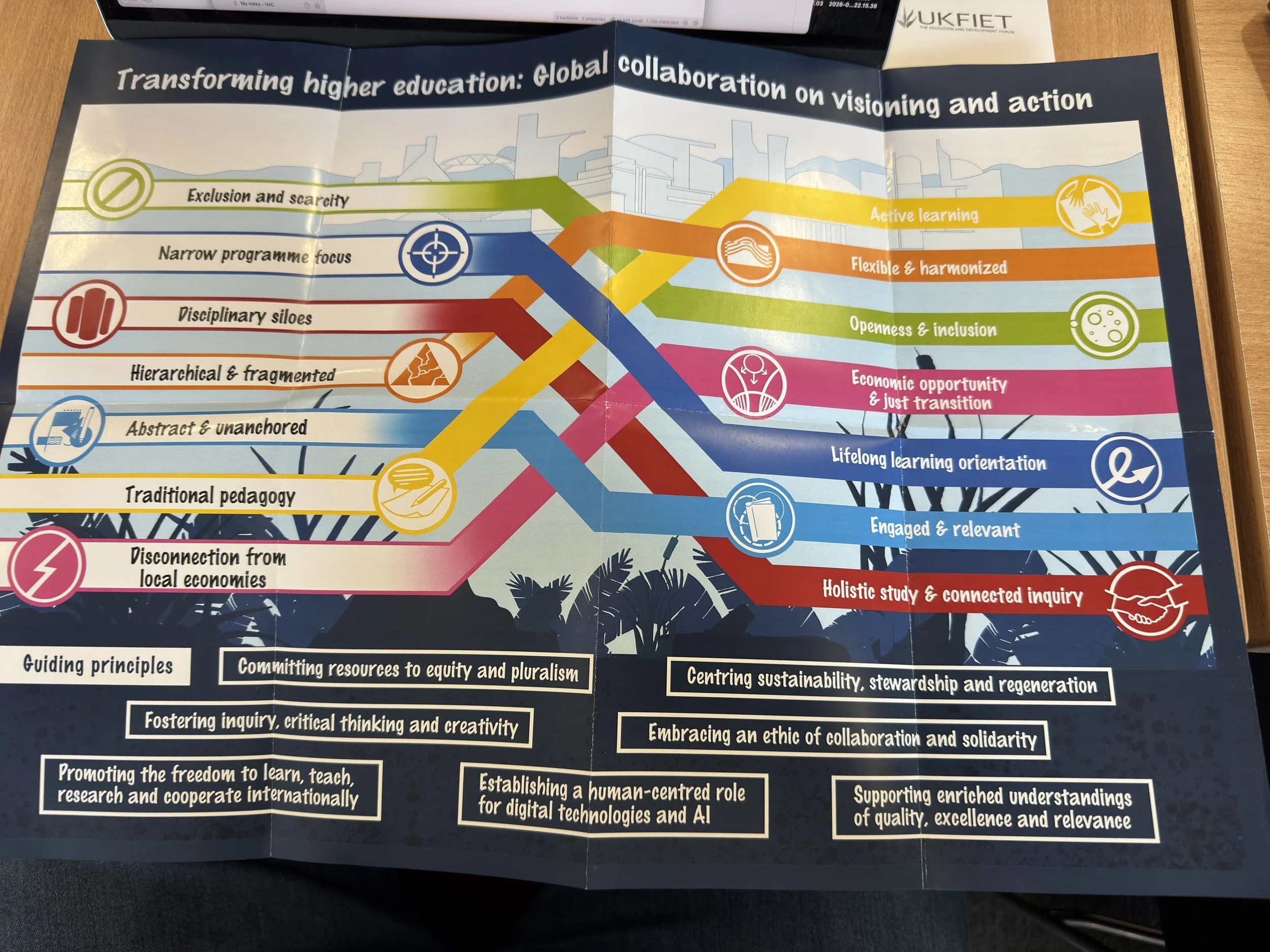

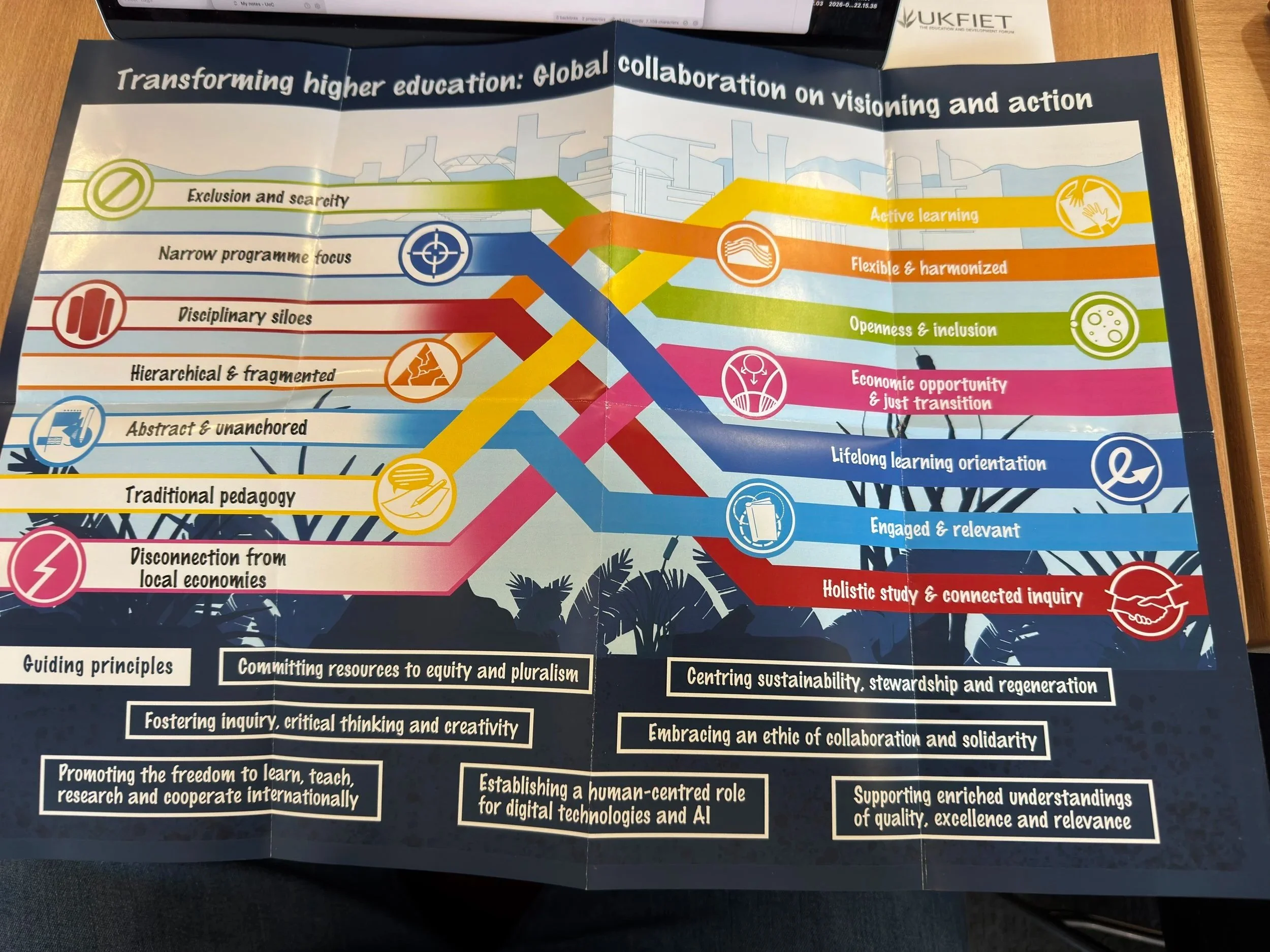

The visual from UNESCO (2026) uses a ribbon/flow diagram to map a transformation from current problems in higher education (left side) toward desired future states (right side):

Current challenges (left): exclusion and scarcity, narrow programme focus, disciplinary siloes, hierarchical and fragmented structures, abstract and unanchored learning, traditional pedagogy, and disconnection from local economies.

Transformed vision (right): active learning, flexible and harmonised systems, openness and inclusion, economic opportunity and just transition, lifelong learning orientation, engaged and relevant curricula, and holistic study and connected inquiry.

At the bottom, seven guiding principles anchor the framework: committing resources to equity and pluralism; fostering inquiry, critical thinking and creativity; promoting freedom to learn, teach, research and cooperate internationally; centring sustainability, stewardship and regeneration; embracing an ethic of collaboration and solidarity; establishing a human-centred role for digital technologies and AI; and supporting enriched understandings of quality, excellence and relevance.

Guiding principles to reshape the future of higher education (UNESCO, 2026)

The roadmap proposes seven guiding principles that are interlinked and mutually reinforcing:

Committing resources to equity and pluralism.

Promoting the freedom to learn, teach, research and cooperate internationally.

Fostering inquiry, critical thinking and creativity.

Establishing a human-centred role for digital technologies and artificial intelligence.

Embracing an ethic of collaboration and solidarity.

Centring sustainability, stewardship and regeneration.

Supporting enriched understandings of quality, excellence and relevance.

The document and what it is asking of us

The UNESCO roadmap is the outcome of a remarkable consultative process: over 15,000 participants, more than 1,500 comments on a draft roadmap, and 250 knowledge products submitted from across the world. It sets out seven guiding principles and a set of lines of transformation intended to move higher education toward what it calls a new social contract. The principles call for committing resources to equity and pluralism; promoting the freedom to learn, teach, research, and cooperate internationally; fostering inquiry, critical thinking, and creativity; establishing a human-centered role for digital technologies and AI; embracing an ethic of collaboration and solidarity; centering sustainability, stewardship, and regeneration; and supporting enriched understandings of quality, excellence, and relevance (UNESCO, 2026).

What the session made clear is that many people working in higher education find these principles genuinely compelling. The hunger for a more collaborative, more inclusive, and more epistemically honest sector is real and broadly shared. What is less clear is whether institutions, as opposed to the individuals within them, are prepared to act on these principles when doing so would cost something: revenue, convenience, prestige, or the comfort of familiar ways of working.

Digital access as an equity question, not a technical one

The roadmap is explicit that enriching higher education with "the possibility to study online and/or in hybrid formats would open higher education to more diversified learner motivations and interests, as well as to those who pursue it alongside full-time employment or care work" (p. 41). It also recognizes that "students can learn in different settings and spaces, whether that be in workplaces, in communities, or different cultural settings" (p. 41).

These are not technical observations about learning management systems. They are equity arguments. And yet across the sector, a counter-movement is underway. Universities that expanded hybrid and online provision during the pandemic, often discovering in the process that engagement did not collapse and that participation from previously excluded groups increased, are now reverting to in-person-only defaults. The rationale is rarely made explicit. When it is, it tends to appeal to the value of campus community, the richness of in-person dialogue, or concerns about student isolation. These are not trivial considerations. But they are being invoked selectively, in ways that consistently favor the preferences of those for whom in-person attendance is easy over those for whom it is costly or impossible.

The roadmap's call to move from "a scarcity and exclusion mindset to an openness and inclusion paradigm" (p. 35) applies here directly. Decisions about session formats, whether a seminar, a meeting, a public lecture, or a research event is offered in hybrid form or in-person only, are not logistical defaults. They are choices about whose participation the institution is prepared to resource and whose it is prepared to make contingent on circumstances that are not equally distributed. For educators, this means thinking carefully about the assumptions embedded in format decisions that are often made without much thought at all. For policymakers, it means recognizing that guidance on hybrid provision, and the resourcing to support it properly, has not kept pace with the rhetoric of inclusion.

Collaborative assessment and the gap between what we teach and what we test

The roadmap calls for pedagogical approaches to move away from "traditional listen-and-repeat methods" and toward active, problem-based, and project-based learning. It argues that "significant learning experiences often begin with a genuinely felt problem motivating the learner" and that student-centeredness means "involving learners in their own learning, so they are the ones making connections and shaping meaning" (p. 45). The document describes the overarching aim of higher education as building "collective and individual capacities for facing our common challenges together" (p. 29).

The conversations in our session pointed to strong agreement with this direction. And yet the dominant model of assessment in higher education remains resolutely individual. Students may be invited to collaborate in seminars, workshops, and project groups. But when grades are assigned, it is nearly always the individual who is evaluated. The group is, in practice, a scaffold for solo performance.

This is not a minor inconsistency. It sends a clear signal to students about what the institution actually values, regardless of what it says about collaboration, communication, and citizenship. If we believe, as the roadmap argues, that higher education's purpose is to build people who can face shared challenges together, then assessing them only as isolated individuals is a structural contradiction at the heart of the enterprise.

The objection that collaborative assessment is difficult to do fairly is real but insufficient. These are design problems, and they are solvable. What they require is institutional will: the willingness to invest in assessment literacy among staff, to create conditions for genuine pedagogical experimentation, and to accept that the discomfort of change is not a reason to preserve a model that is increasingly misaligned with the capabilities higher education claims to develop. For researchers, this is an area where practice-based educational research can make a direct contribution. For policymakers, it is an area where quality frameworks and professional standards could do more to reward innovation in assessment design rather than defaulting to the legibility of individual grades.

Decolonization and the question of whose knowledge counts

Perhaps the most resonant theme of the session was the need to take seriously the decolonization of knowledge: not as a metaphor, a branding exercise, or a curriculum add-on, but as a fundamental rethinking of whose ways of knowing are recognized, valued, and built upon in higher education.

The roadmap is direct about this. It acknowledges that "the claims of local and indigenous knowledge systems are increasingly prevalent, with voices from the global south dismantling knowledge gatekeeping" (p. 17), and calls for universities to engage with "plural forms of knowing as these are practiced by various communities around the globe" (p. 23). In its sixth guiding principle, it calls for research and scholarship to be "democratized, decolonized and disseminated to serve the common good" (p. 31). It argues that universities must go beyond respect and tolerance to ensure that "heterogenous ways of knowing and being become a welcome and respected foundation for building futures together" (p. 23).

For educators, this demands more than adding readings from the Global South to an otherwise unchanged curriculum. It requires examining the epistemic assumptions embedded in how disciplines are structured, what counts as rigorous methodology, which citations carry authority, and whose theoretical frameworks are treated as universal while others are marked as regional or merely applied. These are uncomfortable questions for many established academics, precisely because they put the foundations of expertise under scrutiny rather than merely its contents.

For policymakers, the decolonization agenda has implications for hiring practices, research funding priorities, quality assurance frameworks, and the governance structures of universities themselves. Institutions that talk about decolonization without addressing who sits on their hiring panels, whose research agendas attract institutional investment, and how their quality metrics are constructed are engaging in a form of performativity that the roadmap is trying to move us beyond.

Crucially, as I argue in the companion piece, epistemic justice and material justice are not separable. The question of whose knowledge is centered in a curriculum cannot be fully addressed while the question of who can afford to show up to engage with it remains unresolved. Universities are simultaneously asking people to think more expansively about knowledge while making the conditions of intellectual participation materially harder for precisely the communities whose perspectives the curriculum most needs.

A note on institutional culture and the cost of belonging

There is a question worth putting to any institution that claims to take equity seriously: what does it cost someone to spend a day on your campus? Not in tuition or fees, but in the accumulated small expenditures (transport, food, a hot drink) that constitute the texture of belonging. The answer varies considerably across institutions and across the sector, and the variation is not random. It tends to reflect how seriously an institution has thought about whose comfort and whose finances it has designed itself around.

For policymakers and institutional leaders, this is worth attending to. The grand language of transformation in documents like the UNESCO roadmap finds its test not only in curriculum reform or strategic plans but in the daily, material conditions of the people the institution is supposed to serve. I take this up in much more concrete terms in the companion piece, which looks specifically at Glasgow.

Calls to action

For educators: examine the assumptions embedded in your default practices. When you schedule an in-person-only session, ask who that decision excludes and whether the exclusion is pedagogically justified. When you design an assessment, ask whether it tests the capabilities you claim to value or merely the ones that are easiest to grade individually. When you design or teach a course, ask whose knowledge its theoretical foundations are built on and whether that foundation is as universal as it has been presented.

For researchers: the gap between the transformative agenda the UNESCO roadmap describes and the practices of actual institutions is a rich and urgent site for educational research. Work that documents the equity impacts of hybrid provision decisions, evaluates collaborative assessment models, and traces the relationship between material precarity and epistemic participation is directly actionable. It does not need to wait for the sector to catch up. It can help create the conditions for it to do so.

For policymakers: the seven principles in the UNESCO roadmap are only as meaningful as the frameworks, funding mechanisms, and accountability structures that support them. Guidance on hybrid provision, investment in integrated transport where universities are anchor institutions, reform of quality frameworks to reward pedagogical innovation, and serious attention to student financial support are not peripheral concerns. They are the infrastructure on which the new social contract the roadmap calls for either stands or falls.

The roadmap closes with the observation that "transforming higher education will always be an iterative, ongoing, multilateral and intergenerational process" (p. 55). That is true. It is also, if we are not careful, a way of making peace with the distance between vision and practice. The question is not whether transformation is possible. It is whether we are prepared to begin it, seriously, now.

A companion piece follows shortly, focusing on what these arguments look like in the specific context of Glasgow: its housing emergency, its fragmented transport system, and the daily material conditions of the students and staff who make up its universities.

References & further information

UNESCO. (2026). Transforming higher education: Global collaboration on visioning and action.

Launch event for the roadmap

A snapshot of what the document is about

Listening, Speaking, Learning: On Verbal Feedback and (Re)Humanizing Assessment

Sunset over Queen's Park pond, Glasgow, UK

Recently, I listened to Educatalks: Reflective Practice, a 2022 podcast episode in which Professor Melaine Coward, a professor of medical education, reflects on her career and her commitment to reflective practice. Medical Educatalks is a podcast created by the Developing Medical Educators Group (DMEG) at the Academy of Medical Educators. Toward the end of the conversation, she described her decision to give students verbal feedback on their assessments. The interviewer sounded genuinely surprised; he hadn’t encountered that approach before.

I paused.

Not because it felt novel, but because it felt familiar.

In 2015, while teaching at a University of London institution, I experimented with providing verbal feedback on written assignments. At the time, our digital marking platform enabled tutors to attach audio recordings directly to students’ scripts, so feedback could be posted alongside the written work itself. Students could either listen asynchronously or book a short follow-up slot to discuss it further. I would have their script in front of me as I recorded or spoke with them, talking through strengths, misunderstandings, and next steps. It was dialogic, immediate, and relational, but it was not universally welcomed.

The pushback was swift and couched in procedural language:

How could this be standardized?

How could it be moderated?

Where was the audit trail?

Ironically, the digital system did generate an artefact. The audio file was stored alongside the script. There was a record. And yet the discomfort persisted. What seemed to trouble colleagues was not the absence of documentation, but the presence of voice, with its tone, inflection, and spontaneity. Feedback could no longer be reduced so easily to a static text block. The underlying concern was not simply technical. It was cultural. Feedback, in this framing, was not primarily a pedagogical encounter; it was a compliance mechanism.

Listening to Coward years later, I realized something I could not fully articulate back then: verbal feedback is not merely a technique. It is an epistemological stance. It is a small but meaningful act of (re)humanising assessment.

“But what I found was, so they weren’t reading the comments that I’d spent ages putting on marking there, because I do spend time, it matters that I give good feedback. When I did recorded feedback, I found I had a lot more follow-up from students, because they had had to listen to my feedback, and it was a very clear message of, I really enjoyed this, something for you to think about. I would be quite structured in how I recorded it, so I had notes, so it was formulaic in that sense, but not rehearsed.”

Feedback as encounter, not transmission

Higher education assessment cultures are deeply shaped by what Paulo Freire famously critiqued as the “banking model” of education in Pedagogy of the Oppressed. In that model, knowledge is deposited; feedback becomes a written correction of deficits; learning is framed as remediation.

Written feedback, of course, can be thoughtful and transformative. But it often operates within systems that prioritize defensibility over dialogue. Comments are calibrated for external examiners. Language becomes cautious. Tone becomes formal and neutralized. The student becomes a case.

Audio feedback, even when delivered asynchronously through a digital platform, subtly shifts that dynamic. Students hear emphasis. They hear encouragement. They hear uncertainty where appropriate. Meaning is shaped not only by what is said, but how it is said.

And when audio is paired with optional follow-up conversation, feedback becomes dialogic in a deeper sense. Students can respond, query, reinterpret.

This resonates with Freire’s insistence on dialogue as the foundation of emancipatory education. It also aligns with bell hooks’ vision of engaged pedagogy in Teaching to Transgress, where teaching and learning are relational acts rather than one-way transmissions.

When we speak with students rather than at them, feedback becomes less about surveillance and more about growth. Voice, literal voice, reintroduces presence into assessment by (re)humanizing it.

The standardization question

The resistance I encountered in 2015 revolved around standardisation. Written comments were seen as stable, recordable, and therefore fair. Audio feedback, even though stored and retrievable, was viewed as potentially variable.

But here is the uncomfortable truth: standardization is not synonymous with justice.

Critical and decolonial scholars have long questioned whose norms assessment criteria encode. Ngũgĩ wa Thiong'o, in Decolonising the Mind, reminds us that language and evaluation are never neutral; they are embedded within colonial power structures. Similarly, scholars of antiracist pedagogy argue that assessment practices often privilege dominant linguistic and epistemic norms and performances.

Audio feedback can surface some of this hidden curriculum. It allows educators to unpack what we mean by “criticality” or “coherence” in accessible, responsive ways. It can soften deficit framings by conveying nuance and care. It can make tacit expectations explicit.

For neurodivergent students, multilingual students, or those unfamiliar with disciplinary conventions, hearing feedback, with tone and pacing, can support comprehension in ways that dense written comments may not.

Uniform delivery formats may be easier to audit. But equity sometimes requires responsiveness.

Reflective practice and professional identity

Coward’s framing of verbal feedback emerged from reflective practice, a concept often associated with Donald Schön and his work The Reflective Practitioner. Reflection is not merely about improving technique; it is about interrogating the assumptions that underpin our actions.

Looking back, I can see that my 2015 experience exposed a tension between two logics, a clash of paradigms:

Assessment as pedagogical relationship

Assessment as quality assurance infrastructure

That clash raised two questions. Was feedback a compliance mechanism or a pedagogical relationship? Was my role to produce defensible documentation or to cultivate understanding?

The digital tool itself was neutral. It could host text or voice. The debate was about what counted as legitimate academic labor and legitimate evidence of fairness.

Reflective practice asks us to interrogate not only how we teach, but why certain practices are normalized while others are treated as suspect.

(Re)humanizing assessment in digital spaces

In my current work, including conversations around decolonizing curricula and rethinking assessment, I often return to a simple question:

What would assessment look like if we centered humanity rather than auditability?

This is not an argument to abandon rigor or documentation. Rather, it is a call to rebalance priorities.

(Re)humanizing assessment might include:

Dialogic feedback conversations alongside written summaries

Audio or video feedback that conveys tone and relational presence

Opportunities for students to respond to feedback

Co-constructed criteria discussions

Assessment designs that value multiple ways of knowing

These moves resonate with broader critical pedagogical commitments: resisting neoliberal metrics, challenging deficit framings, and recognizing students as co-participants in knowledge production. They echo critical pedagogy’s insistence on dialogue, antiracist commitments to challenging hidden norms, and decolonial calls to unsettle inherited hierarchies of knowledge.

They also align with emerging scholarship on compassionate pedagogy and relational assessment cultures within higher education.

“Hearing someone talk about what you’ve done, the tone and voice to highlight praise, concern, and you can add in a more questioning tone ... They loved it. They loved it because they could hear from my touch.”

An epiphany, years later: are our systems human enough?

Listening to Coward describe her practice, I felt both affirmed and reflective. The surprise expressed by the podcast interviewer revealed how deeply entrenched written, standardized feedback remains. Yet the fact that such practices continue to surface across disciplines, from medical education to the humanities, suggests a quiet shift. I also felt less concerned with whether verbal feedback is innovative and more interested in what it reveals. What I once framed defensively as “innovative feedback” now feels more clearly like a small act of resistance against depersonalized academic systems.

Even when captured and archived in a digital platform, voice unsettles the fantasy that assessment can be entirely standardised and neutral. It reintroduces tone, care, and relational accountability.

Perhaps the question is not whether audio feedback can be moderated.

Perhaps the more urgent question is whether our assessment cultures allow space for humanity, for dialogue, for nuance, for recognition.

If critical, antiracist, and decolonial pedagogies ask us to re-centre people rather than processes, then even something as simple as attaching a recorded voice note to a script can become a quietly radical act.

Suggested further reading

Pedagogy of the Oppressed – Paulo Freire

Teaching to Transgress – bell hooks

Decolonising the Mind – Ngũgĩ wa Thiong'o

A Handbook of Reflective and Experiential Learning – Jennifer A. Moon

The Reflective Practitioner – Donald Schön

And, of course, I would recommend listening to Educatalks: Reflective Practice featuring Melaine Coward, not because verbal feedback is revolutionary, but because reflective conversations about practice remind us that teaching is, at its heart, relational work.

In a sector increasingly governed by metrics, that reminder feels quietly radical.

Experimenting with generative AI: (re)designing courses and rubrics

In this post, I share some ideas for (re)creating courses and assessment rubrics as well as getting ideas for creative assessments using generative AI.

Experimenting with creating a course

I tried out Google Bard and chatGPT 3.5 to design courses and rubrics. In each case, the key was being specific about what I wanted the tool to create. When writing your prompt or query, this means being clear about:

Context: e.g. state who you are or who you imagine yourself to be when creating the prompt

Audience: who is the audience of what you want to create? Students? Staff? Administrators? Management? The Public?

Purpose: in brief terms, what do you want to achieve?

Scope: similar to context, but more focused. ‘Create a university-level course on sociology’ is fine, but narrowing it down to a specific year group (Year 1, Year 2, etc.) focuses the prompt and produces more tightly targeted examples.

Length: it’s always helpful to state the length of the proposed course or output. For example, are you asking for a draft of a 12-week course? A two-page maximum syllabus? A three-paragraph summary?
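To make the five elements concrete, here is a minimal Python sketch that assembles them into a single prompt. It is illustrative only: the `PromptSpec` and `build_prompt` names and the sentence templates are my own hypothetical choices, not features of chatGPT or Google Bard, and the result is simply text you would paste into whichever tool you use.

```python
from dataclasses import dataclass


@dataclass
class PromptSpec:
    """Hypothetical container for the five prompt elements."""
    context: str   # who you are, or who you imagine yourself to be
    audience: str  # students, staff, administrators, management, the public
    purpose: str   # in brief terms, what you want to achieve
    scope: str     # e.g. a specific year group, to narrow the request
    length: str    # e.g. a 12-week course, a two-page syllabus


# Each element becomes one sentence; the wording here is illustrative only.
TEMPLATES = [
    ("context", "{}"),
    ("audience", "The audience is {}."),
    ("purpose", "{}"),
    ("scope", "The scope is {}."),
    ("length", "The output should be {}."),
]


def build_prompt(spec: PromptSpec) -> str:
    """Join the non-empty elements into one plain-language prompt."""
    sentences = []
    for field_name, template in TEMPLATES:
        value = getattr(spec, field_name).strip()
        if value:  # skip any element left blank
            sentences.append(template.format(value))
    return " ".join(sentences)


if __name__ == "__main__":
    chemistry = PromptSpec(
        context="I am a lecturer who teaches university-level chemistry.",
        audience="university students",
        purpose="I wish to create a new course on inorganic chemistry with 4 assignments.",
        scope="Year 2",
        length="a 12-week course outline",
    )
    print(build_prompt(chemistry))
```

The assembled text reads more mechanically than a prompt written by hand, but keeping the elements separate makes it easy to vary one (say, the year group) while holding the others constant.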

For this example, I used the following prompt…

I am a lecturer who teaches university-level chemistry. I wish to create a new course on inorganic chemistry for Year 2 university students. The course should be 12 weeks long and have 4 assignments. What might this look like?

Below are two GIFs showing chatGPT and Google Bard respectively.

NB: You may wish to select the images to see a larger version.

Brief reflections

I used a similar prompt for both generative AI tools. To add an element of creativity, I slightly changed the prompt when using Google Bard so that it would suggest creative assessments. I then went back to chatGPT and asked it to suggest ideas for creative assessments within the context of this course as well.

The two tools produce similar results for this particular prompt. Both suggest an outline for a course on inorganic chemistry. Google Bard integrates the creative assessments into some of the topics, while chatGPT, predictably, produces a separate list of creative assessments, since I asked for them after the initial prompt.

Interestingly, Google Bard also expands a little at the end of the outline with further examples of non-written, creative assessments. chatGPT, on the other hand, gives some examples of ways of supporting learning and teaching after creating an example course outline. The creative assessments it lists overlap with Google Bard's, although some differ, such as the quiz show example.

For transparency, I do not teach chemistry and never have. I have, however, supported chemistry students with their academic writing, including writing lab reports and researching the topic. On the surface, the course looks coherent. However, I will leave that judgement to those who teach chemistry!

What you can do

To replicate what I’ve done, copy and paste the prompt into your generative AI tool of choice.

Please note: you’ll likely get a slightly different response, and I did not rerun the prompts to check how much the responses vary. That said, Google Bard automatically offers additional drafts.

Creating assessment rubrics

Educators are often handed marking rubrics with little chance to develop or create their own. What this means is that when it comes to creating an assessment rubric, some educators may not have practical experience beyond what they have observed. In this case, generative AI can provide ideas and food for thought. This can be especially helpful for getting ideas for creative assessments that are still valid and rigorous while offering a suitable alternative to traditional assessments.

I ask generative AI tools to create assessment rubrics in the examples below. Remember: you need to give generative AI a context (e.g. you’re a lecturer teaching X), a specific request (e.g. you want to create an assessment rubric) and ensure the request has specific parameters (e.g. you provide your specific criteria for this rubric).

I am a lecturer. I wish to create a marking rubric for an essay-based assessment. The rubric should include the following criteria: criticality, academic rigor, references to research, style and formatting.

NB: You may wish to select the images to see a larger version.

Reflections

In both cases, I state my (imagined) role and the type of assessment I usually employ, and ask the tools to suggest ideas that include specific criteria. Each generative AI tool then creates a sample rubric based on what I have asked it.

Both tools create a table that looks the way I would expect an assessment rubric to look. Each table includes the criteria and sample grade bands, with descriptor text that cross-references the criteria. What both generally do well is provide sample descriptor text. However, you will need to tweak, modify and/or change the criteria to suit your specific, local context.
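If it helps to see the structure both tools produced, a rubric can be thought of as a grid: one row per criterion, one column per grade band, and a descriptor in each cell. The Python sketch below builds an empty grid for the four criteria in my prompt and renders it as a Markdown-style table that you could fill in yourself or paste back into a tool with a request to draft the descriptors. The band names (`Excellent` through `Fail`) and the function names are hypothetical placeholders; swap in your own institution's bands.

```python
from typing import Dict, List

# The four criteria from my prompt; the band names are hypothetical placeholders.
CRITERIA = ["Criticality", "Academic rigor", "References to research", "Style and formatting"]
BANDS = ["Excellent", "Good", "Satisfactory", "Weak", "Fail"]

Rubric = Dict[str, Dict[str, str]]


def rubric_skeleton(criteria: List[str], bands: List[str]) -> Rubric:
    """Return a grid with one empty descriptor per (criterion, band) cell."""
    return {criterion: {band: "" for band in bands} for criterion in criteria}


def to_table(rubric: Rubric, bands: List[str]) -> str:
    """Render the grid as a Markdown-style table; empty cells show 'TBC'."""
    header = "| Criterion | " + " | ".join(bands) + " |"
    divider = "|" + " --- |" * (len(bands) + 1)
    rows = [
        f"| {criterion} | " + " | ".join(cells[band] or "TBC" for band in bands) + " |"
        for criterion, cells in rubric.items()
    ]
    return "\n".join([header, divider, *rows])


if __name__ == "__main__":
    rubric = rubric_skeleton(CRITERIA, BANDS)
    # Fill in one cell by hand as an example; the rest stay as TBC.
    rubric["Criticality"]["Excellent"] = "Evaluates sources critically and builds an original argument."
    print(to_table(rubric, BANDS))
```

Keeping the criteria and bands as separate lists mirrors the cross-referencing both tools did: every criterion gets a descriptor for every band, so gaps are easy to spot.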

Creating rubrics specific to your institution

If your institution commonly uses a general, overarching rubric, you can ask generative AI to suggest sample rubrics based on it. This may, however, be difficult, depending on how complex your institution’s rubric is.

In the examples below, I ask chatGPT 3.5 and Google Bard respectively to create an example rubric based on Glasgow University’s 22-point marking system. This did, however, prove difficult!

Can you change the marking scale to a 22 point scale used at the University of Glasgow?

Reflections

The prompt above initially confused both generative AI tools. This may be because a 22-point scale differs from most marking scales, or because I had not described the different bands. In this case, my suggestion is to ask chatGPT or Google Bard to create a rubric based on your own marking criteria. You can then tailor the sample rubric it creates to your local needs.

As you can see, both tools got some areas right and others wrong.

What chatGPT did well:

it created a scale based on the criteria I provided

it included the marking bands, cross-referenced against the criteria

it included some basic descriptor text

What chatGPT could do better:

the descriptor texts were wildly off compared with the example marking schemes

it struggled to capture the nuances between the marking bands

What Google Bard did well:

the descriptor text for each band more closely matches what I would expect to see

the marking bands are divided out nicely

the criteria are cross-referenced against marking bands

What Google Bard could do better:

it’s hard to say what it could do better right now, given how well it created a marking rubric based on my query!

that said, the descriptor texts for each band would likely need some tweaking to match local styles
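One way to act on the suggestion of giving the tools more context is to spell out the bands in the prompt itself. The Python sketch below writes out the University of Glasgow's 22-point scale as I understand Schedule A of its Code of Assessment (A1 to A5 down to H, mapped to 22 down to 0 points) and formats it as text to paste ahead of a rubric request. Treat the band descriptors as an assumption to check against your current local documentation rather than an authoritative copy; the `SCHEDULE_A` name and the helper functions are hypothetical.

```python
from typing import Dict, List, Tuple

# University of Glasgow 22-point scale, as I understand Schedule A of its
# Code of Assessment. Assumption: check against your current local version.
# letter grade -> (descriptor, points for each secondary band, highest first)
SCHEDULE_A: Dict[str, Tuple[str, List[int]]] = {
    "A": ("Excellent", [22, 21, 20, 19, 18]),
    "B": ("Very good", [17, 16, 15]),
    "C": ("Good", [14, 13, 12]),
    "D": ("Satisfactory", [11, 10, 9]),
    "E": ("Weak", [8, 7, 6]),
    "F": ("Poor", [5, 4, 3]),
    "G": ("Very poor", [2, 1]),
    "H": ("No convincing evidence of attainment of intended learning outcomes", [0]),
}


def secondary_bands(letter: str) -> List[Tuple[str, int]]:
    """Return (band code, points) pairs, e.g. ("A1", 22); H has a single band."""
    _, points = SCHEDULE_A[letter]
    if len(points) == 1:
        return [(letter, points[0])]
    return [(f"{letter}{i}", p) for i, p in enumerate(points, start=1)]


def scale_context() -> str:
    """Format the scale as plain text to paste ahead of a rubric request."""
    lines = ["Use the University of Glasgow 22-point scale, from highest to lowest:"]
    for letter, (descriptor, _) in SCHEDULE_A.items():
        bands = ", ".join(f"{code} = {points}" for code, points in secondary_bands(letter))
        lines.append(f"- {letter} ({descriptor}): {bands}")
    return "\n".join(lines)


if __name__ == "__main__":
    print(scale_context())
    print("Now create a marking rubric using these bands for the criteria: "
          "criticality, academic rigor, references to research, style and formatting.")
```

Listing every secondary band with its points removes the guesswork that seemed to trip up both tools, although the descriptor text for each band would still need local tailoring.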

Getting ideas for creative assessments

As I noted earlier, you can use generative AI to get ideas for (more) creative assessments that aren’t traditional written assignments. Traditional, written-only assignments are great for some things. However, there are other, more inclusive and creative ideas for assessments that you can use in your teaching, no matter the subject.

For this particular example, I draw upon my own area of expertise, which lies at the intersection of education and sociology.

I teach a social sciences subject in university. Traditionally, we use written assessments such as essays and exams as assessments. What are some creative alternative assessments?

Reflections

In brief, as with the first example on chemistry, both generative AI tools suggest a good range of creative and even collaborative assessments that you can use within your own context.

You may already use some of these, such as mind maps and portfolios. That said, there are a lot of good ideas that have been suggested that might be worth trying out. I would recommend co-creating these with students, especially if an idea appears new or innovative or out of your personal comfort zone as an educator. You may be surprised at how quickly your students take to becoming partners in learning and teaching.